
| Planning |

Others have already reported on some of the incredible work at the hackfest, including the bold new Epiphany redesign, accessibility support for WebKit2, and JHBuild-ification. I'd like to talk a bit about something in which I'm personally involved: accelerated compositing.


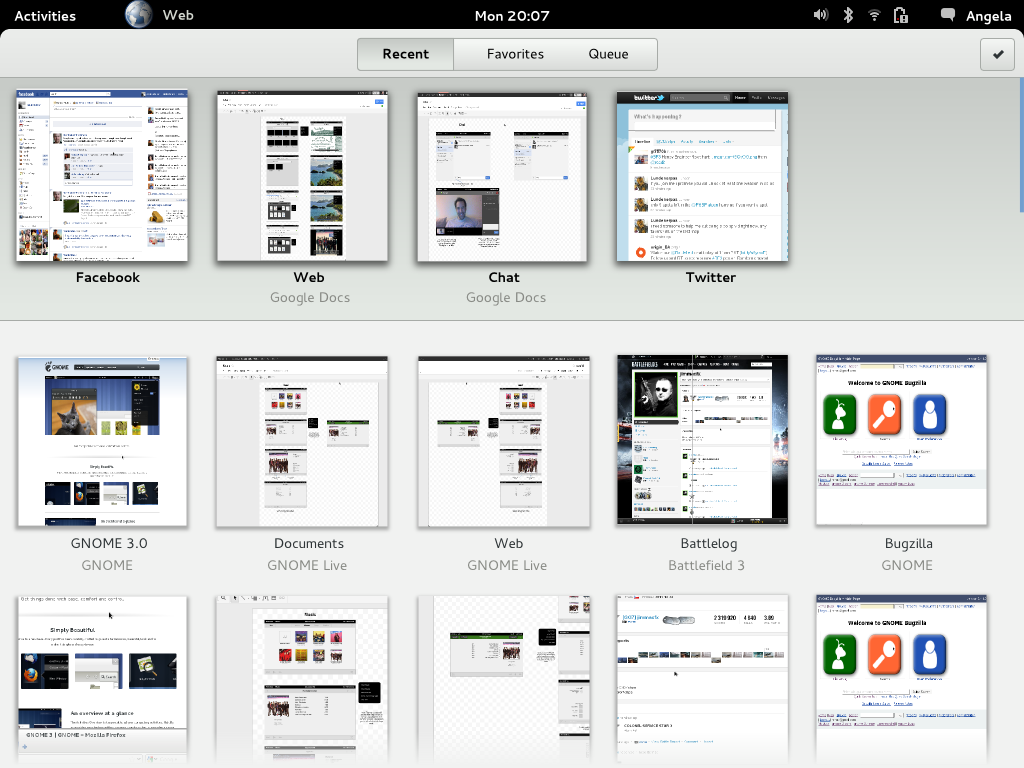

| Epiphany's new look |

In the context of WebKit, drawing state is the rendered page itself, with all its layered divs, canvas elements, video tags and WebGL contexts. WebKit stores elements to render in a data structure called the "render tree." Members of the render tree which are drawn in main memory must be sent to the GPU to be displayed on the screen. Furthermore, some of these elements may be rendered on the GPU itself, so ideally we want to avoid copying them to main memory only to copy them back to the GPU at a later stage.
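To make the distinction concrete, here is a toy sketch in Python. It is not WebKit code: `RenderNode`, `on_gpu`, and `needs_upload` are names invented for illustration, and the real render tree is far richer. The sketch only shows the idea of walking a tree and separating the members drawn in main memory (which must be uploaded) from those that already live on the GPU (which we would rather not copy around).

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class RenderNode:
    """Toy stand-in for a render tree member (hypothetical, not WebKit's class)."""
    name: str
    on_gpu: bool = False  # True when the content is already produced on the GPU
    children: List["RenderNode"] = field(default_factory=list)


def walk(node: RenderNode) -> Iterator[RenderNode]:
    """Visit the node and all of its descendants in pre-order."""
    yield node
    for child in node.children:
        yield from walk(child)


def needs_upload(root: RenderNode) -> List[str]:
    """Names of nodes drawn in main memory, which must be sent to the GPU."""
    return [node.name for node in walk(root) if not node.on_gpu]


# A small page: two ordinary divs, a WebGL canvas, and an accelerated video.
page = RenderNode("body", children=[
    RenderNode("div#header"),
    RenderNode("canvas#webgl", on_gpu=True),
    RenderNode("div#article", children=[RenderNode("video", on_gpu=True)]),
])

print(needs_upload(page))  # ['body', 'div#header', 'div#article']
```

The canvas and the video never show up in the upload list, which is exactly the copy we want to avoid.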

CPUs are very fast, so it's often sufficient to rasterize things into main memory. On the other hand, when the entire page is rendered by the CPU, it spends a lot of time doing "boring" tasks that the GPU loves doing, such as compositing (blending images into each other). Accelerated compositing attacks this by slicing the page into layers of related content. The way this slicing works is a heuristic, but generally speaking, divs that share a z-index and CSS transform are grouped into a layer, while WebGL contexts and hardware-accelerated video each get their own layer. WebKit then renders each of the non-GPU layers into an image and uploads those images to the GPU, which composites them along with the layers that are already there.
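Here is a rough sketch of both halves of that idea, again in plain Python rather than anything from WebKit. The grouping rule in `layer_key` is my simplified reading of the heuristic described above, and every name in it (`Element`, `layer_key`, `slice_into_layers`, `composite`) is made up for the example. The blending function is the standard Porter-Duff "source over" operator on premultiplied RGBA, which is the kind of per-pixel work a GPU does cheaply.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Pixel = Tuple[float, float, float, float]  # premultiplied RGBA, each in 0..1


@dataclass(frozen=True)
class Element:
    """Hypothetical page element; only the fields the toy heuristic needs."""
    name: str
    z_index: int = 0
    transform: str = "none"
    kind: str = "div"  # "div", "webgl", or "video"


def layer_key(element: Element) -> tuple:
    """Simplified heuristic: GPU content gets its own layer, divs are grouped."""
    if element.kind in ("webgl", "video"):
        return ("gpu", element.name)
    return ("cpu", element.z_index, element.transform)


def slice_into_layers(elements: List[Element]) -> Dict[tuple, List[Element]]:
    """Group elements into layers keyed by layer_key."""
    layers: Dict[tuple, List[Element]] = {}
    for element in elements:
        layers.setdefault(layer_key(element), []).append(element)
    return layers


def source_over(src: Pixel, dst: Pixel) -> Pixel:
    """Porter-Duff source-over for premultiplied RGBA pixels."""
    inverse_alpha = 1.0 - src[3]
    return tuple(s + d * inverse_alpha for s, d in zip(src, dst))


def composite(stack: List[Pixel]) -> Pixel:
    """Blend one pixel from each layer, given in bottom-to-top order."""
    result: Pixel = (0.0, 0.0, 0.0, 0.0)  # start from fully transparent
    for pixel in stack:
        result = source_over(pixel, result)
    return result


elements = [
    Element("header"),
    Element("article"),
    Element("menu", z_index=1),
    Element("spinner", transform="rotate(45deg)"),
    Element("game", kind="webgl"),
    Element("clip", kind="video"),
]
layers = slice_into_layers(elements)
print(len(layers))  # 5

opaque_blue = (0.0, 0.0, 1.0, 1.0)
half_red = (0.5, 0.0, 0.0, 0.5)  # 50% transparent red, premultiplied
print(composite([opaque_blue, half_red]))  # (0.5, 0.0, 0.5, 1.0)
```

In this toy version, `header` and `article` end up in the same layer because they share a z-index and transform, so they would be rasterized into one image and uploaded once. Only the three `"cpu"` layers need uploading; the WebGL and video layers are already where the compositor wants them.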

Collabora, which created the Clutter port of WebKit, has an implementation of accelerated compositing using Clutter. At the hackfest, Joone Hurr and Gustavo Noronha Silva started integrating this version into WebKitGTK+. The nice thing about the Clutter implementation is that it's almost complete. The unfortunate thing is that Clutter uses COGL, which has very poor support for using raw OpenGL in the application. This means that the WebKitGTK+ port would need to rewrite its WebGL backend to use the Clutter implementation. Igalia is also working on two implementations (OpenGL and the Cairo fallback) using No'am Rosenthal's very nice TextureMapper abstraction.

During the hackfest, we decided to attempt to integrate all three approaches into the tree. The Clutter version is a nice stopgap for people who want to play with accelerated compositing or are using Clutter already. The initial parts of this implementation should be landing soon. Over the next few months we'll also be submitting OpenGL and Cairo versions of accelerated compositing. We already made great progress at the hackfest. If you're interested in the work, you can follow the accelerated compositing metabug.